About Me

I'm Amit Raj Reddy Dharam, a Computer Science Master's student at Arizona State University, graduating in May 2026. My coding journey began during my undergrad at BITS Pilani, where I pursued a dual degree in Computer Science and Mathematics. It was there that I discovered my passion for machine learning and AI, diving deep into data structures in C/C++, object-oriented programming in Java, and eventually deep learning with Python.

Over the past few years, I've evolved from writing my first "Hello World" to building production ML systems, winning hackathons, and working as a Machine Learning Engineer at TELUS Digital. Today, I am focused on cloud computing, GenAI applications, and creating AI-powered solutions that make a real impact.

Hobbies & Interests

- Hackathon enthusiast: I love the thrill of building projects under pressure and have won multiple competitions, including Sunhacks 2025 and InnovationHacks 2025

- Tinkering with AI projects: from personal smart assistants to computer vision applications

- Cloud computing: deploying scalable systems on AWS

- Continuous learner: always exploring new ML frameworks, tools, and techniques

- Open source contributor: giving back to the community and collaborating on projects that matter

- ASU sports fan: catching Sun Devil games when I can

- Watching anime when not debugging: convinced that the One Piece is real!!

Resume

View my resume to learn more about my experience, skills, and accomplishments.

Education

Arizona State University

August 2024 – May 2026

Master's in Computer Science

GPA: 4.00/4.00

Relevant Courses

BITS Pilani, Hyderabad Campus

August 2018 – July 2023

Dual Degree: B.E. in Computer Science and M.Sc. in Mathematics

Relevant Courses

Experience

AI Data Science Analyst (Part-Time)

September 2024 – June 2025

Enterprise Technology at Arizona State University

Key Achievements

- Implemented the backend for an NL2SQL conversational agent using Python and FastAPI, allowing users to query, filter, and display data from multi-table databases using conversational inputs, with query execution accuracy above 85%

- Optimized natural-language-to-SQL module responses by incorporating team lead and engineer feedback into prompt engineering, LLM fine-tuning, and the GraphRAG implementation, leading to a 5% increase in accuracy

- Integrated LangChain for fast orchestration and Neo4j for efficient embedding-based semantic search, used AWS EKS for scalable inference, and reduced response time by 30% over 100+ questions per session

Software Development Engineer 1 - Machine Learning

July 2023 – June 2024

Telus International AI CV

Key Achievements

- Instruction-tuned open-source LLMs on scientific topics with PyTorch and PEFT-based methods to create synthetic data through a RAG pipeline, increasing manual question generation throughput by 50% and reducing generation time by 40%

- Evaluated custom-trained 3D object detection (voxel-based) and tracking (DeepSORT) models on the KITTI benchmark, achieving around 90% precision for object detection and 70% MOTA (Multi-Object Tracking Accuracy) for tracking

- Mentored an intern to test the LLM against conventional NLP benchmarks (MATH, MMLU) and internal domain-specific datasets, achieving a 6% improvement in benchmark scores and 50% faster evaluation

Software Development Engineer Intern - Machine Learning

August 2022 – July 2023

Telus International AI CV

Key Achievements

- Prototyped DeepSORT and ByteTrack algorithms for bounding box tracking over video sequences, resulting in 80% mAP for object recognition and 75% IDF1 for multi-object tracking, as confirmed against the MOT17 benchmark

- Improved image segmentation precision by 23% across 15,000+ annotated frames by uptraining U-Net and Mask R-CNN architectures using the CityScapes dataset, reducing manual annotation time from 8 hours to 5 hours per dataset batch

- Deployed ML APIs serving 15+ active projects with sub-200 ms response times by establishing FastAPI and TorchServe endpoints, containerizing the segmentation models with Docker, and hosting on AWS EC2 with JWT authentication

Projects

Hackathon Winners & Academic Projects

Technologies

Key Features

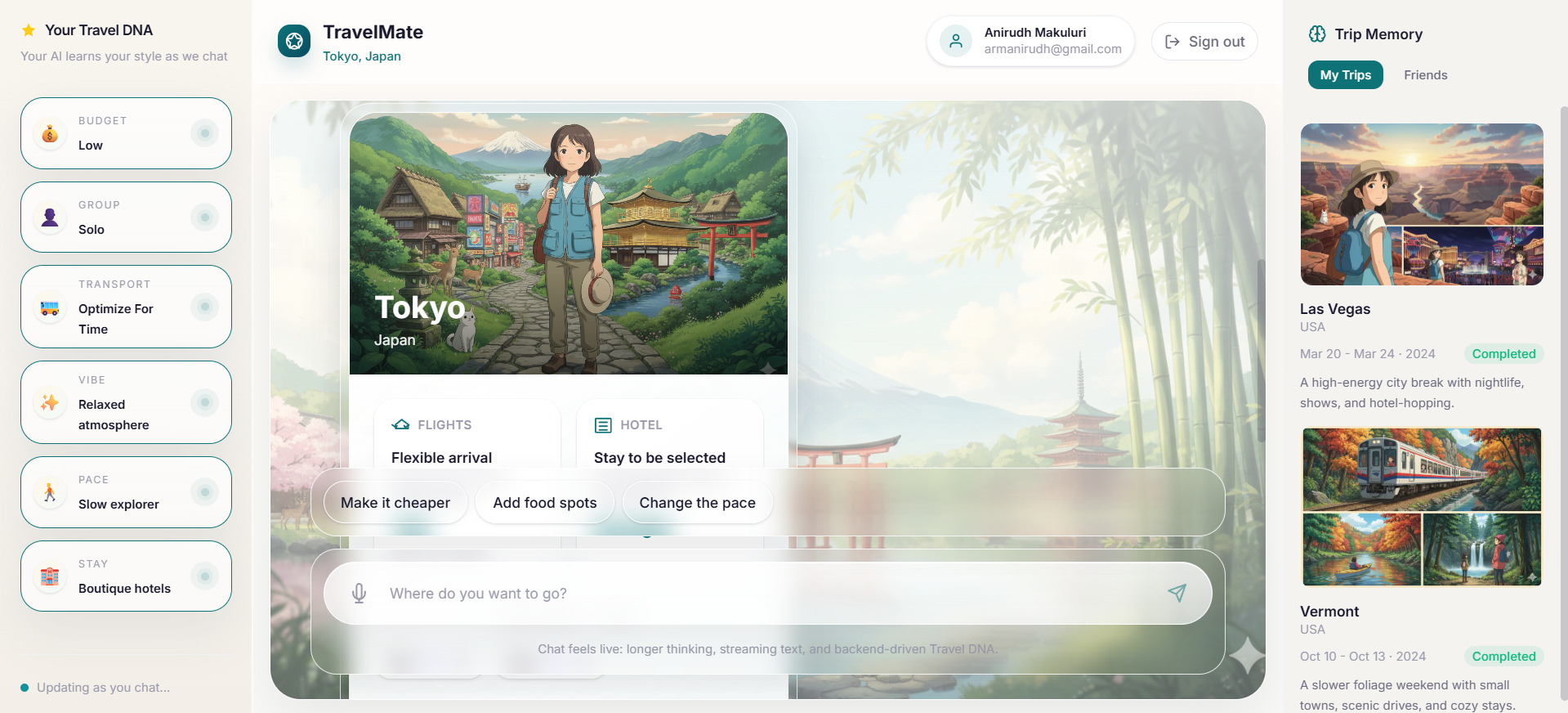

- Created a multi-agent AI travel planner with a Python/FastAPI backend that coordinates specialized agents to generate itineraries, check feasibility, and ground the plans in real-world data. The system was built and tested on Google Vertex AI and AI Studio, and it uses the Google Maps and Weather APIs to produce practical, day-by-day trip plans.

- Engineered and integrated expressive voice agents that use ElevenLabs' conversational AI capabilities to handle natural language queries on trip itineraries and travel blogs, wired through an MCP-based multi-agent pipeline with a responsive frontend in Next.js, TypeScript, Tailwind CSS, and session-aware auth via Auth0, and deployed on Vercel.

Technologies

Key Features

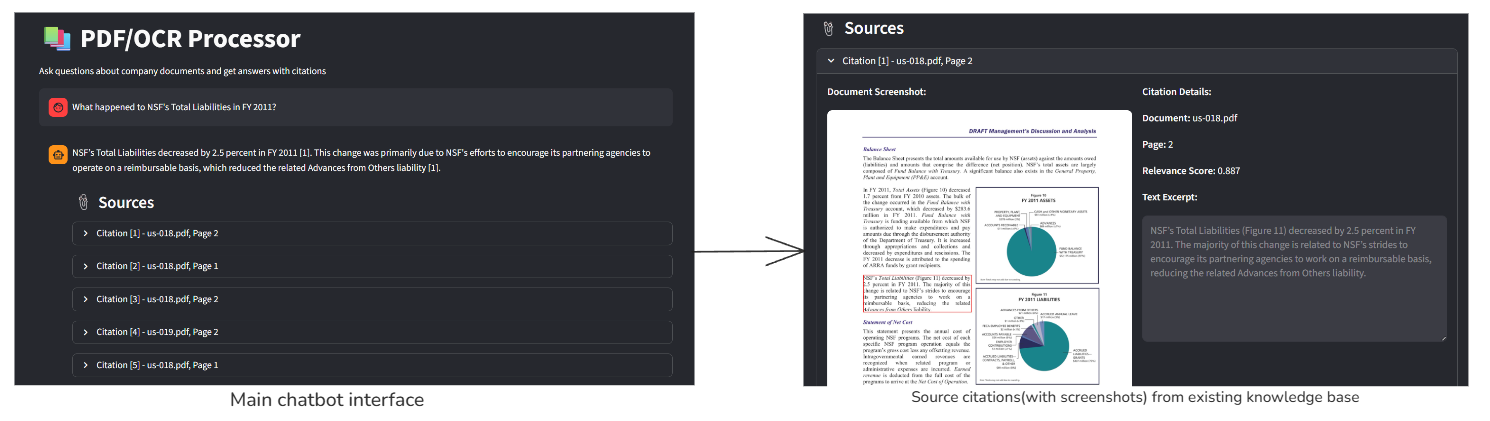

- Built a hybrid RAG pipeline for extracting knowledge from PDFs by combining Neo4j graph traversal, vector similarity, and full-text search. IBM Docling was used for layout-aware extraction, and local sentence transformers were used for zero-API-call retrieval. Google Gemini handled knowledge triplet extraction and answer generation through a FastAPI backend.

- Made a grounded citation UI with Streamlit that shows the top five answers with PDF screenshot overlays and bounding box highlights. Containerized the Neo4j knowledge base in Docker, and used IBM Docling for deep layout parsing and pdf2image for 300 DPI page rendering.

Technologies

Key Features

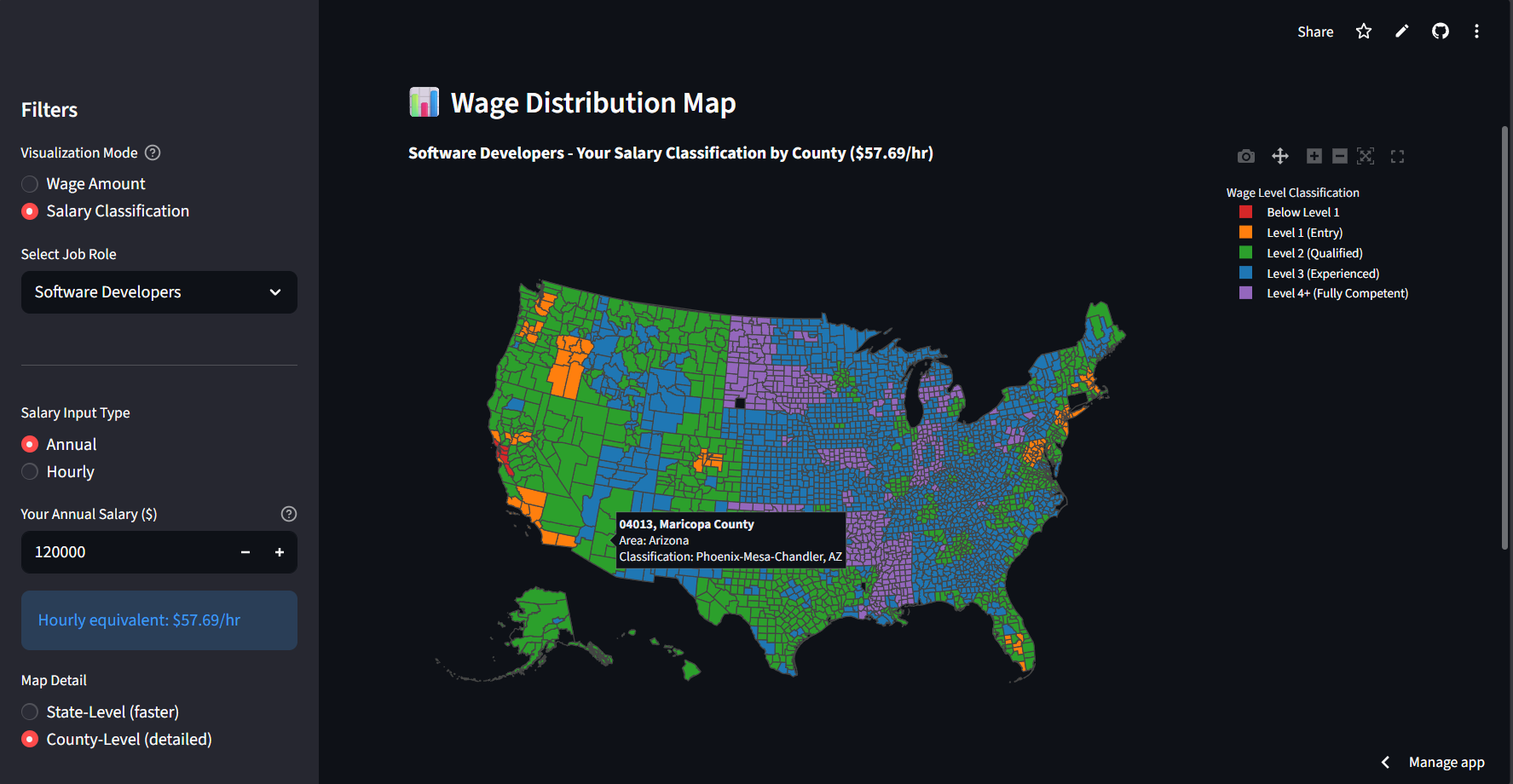

- Developed an interactive H-1B wage dashboard that shows U.S. Department of Labor prevailing wage data for each state and county, letting users compare salaries for more than 850 job roles across 4 wage levels. It was built with Python and Streamlit and is now live on Streamlit Cloud.

- Added a salary classification feature to the dashboard that shows in real time how a user's input salary compares to regional wage levels. It indicates which level (I to IV) the salary falls into for any geography, with dynamic stat cards (avg, median, min, max) for each filtered view.

Technologies

Key Features

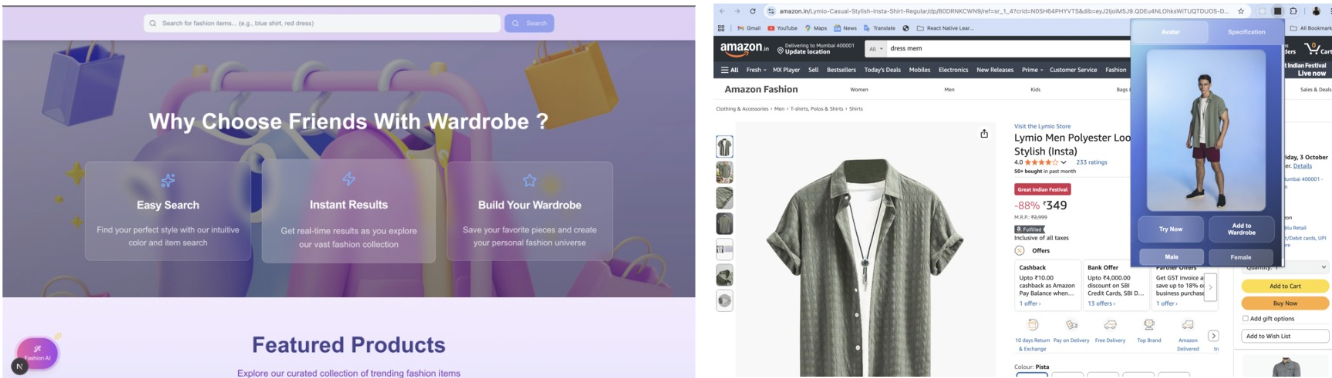

- Made a browser extension for AI-powered virtual try-ons that overlays chosen clothes on a user model in real time and checks for duplicate clothes before the user buys them. The extension uses Google Gemini and Nano Banana for AI fitting, MongoDB for storing wardrobe and preference data, and AWS S3 for safe storage of clothing images. The backend is written in Python and FastAPI, and the frontend is written in React and Next.js.

- Built a chatbot that helps people choose outfits for different occasions (like parties and events) by mixing and matching items from their own wardrobes. It can find duplicates using smart MongoDB queries on color, type, and style. The whole application is containerized with Docker and deployed through a CI/CD pipeline on GCP.

Technologies

Key Features

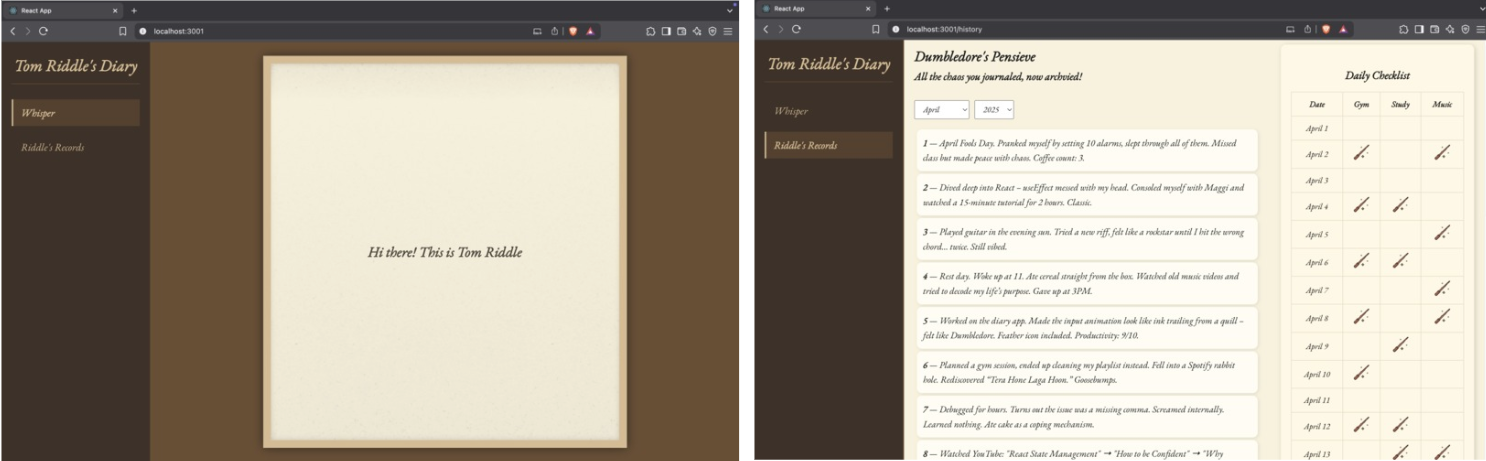

- Designed an AI-powered personal diary agent with long-term conversational memory; users can log thoughts, query past entries by date, and reflect on emotional patterns over time, powered by Google GenAI and PyTorch Transformers for empathetic response generation, with ChromaDB handling semantic search and persistent memory retention via a FastAPI backend.

- Architected a reflective personal dashboard with daily summaries, emotional tone tracking, and habit checkboxes wired through an MCP integration layer for agent tool orchestration, with an immersive React.js frontend featuring a disappearing-input interaction design.

Social Impact & Volunteer Projects

Technologies

Key Features

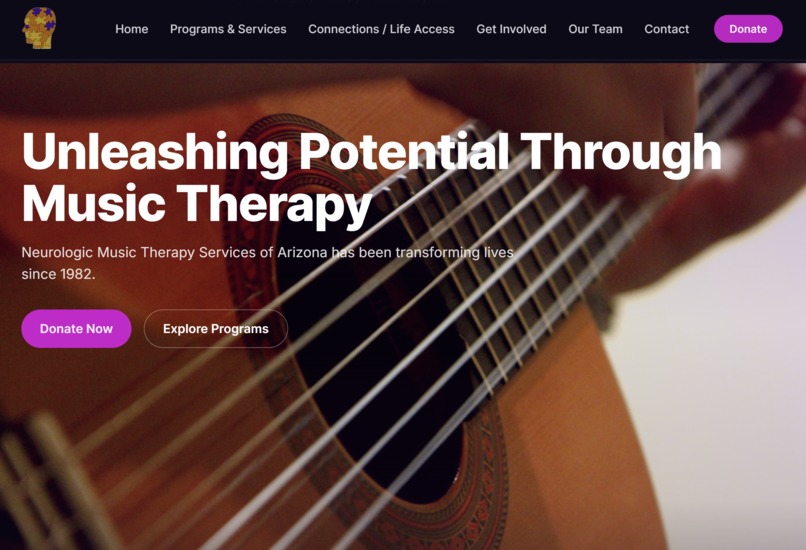

- Our team was inspired by NMTSA's mission to make music therapy accessible to everyone in the community. We noticed that their existing site could be more engaging, modern, simpler to work with, and easier to maintain. We wanted to create a new digital experience that reflects the heart of NMTSA as a place of compassion, creativity, and connection through music.

Technologies

Key Features

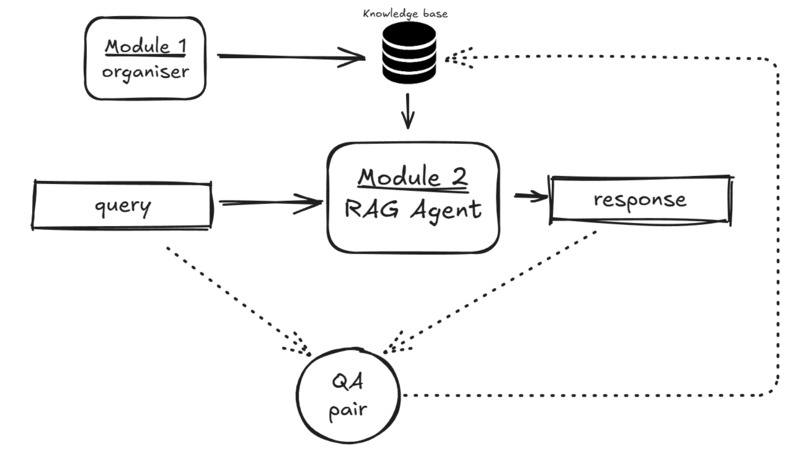

- Our team developed an AI agent integrated with Google Drive, using Python, LangChain, and RAG to assist with document organization, tagging, and retrieval. It implements AI-driven document categorization and suggests reorganization strategies, with enhanced prompting capabilities. We built it for the nonprofit Heritage Square Foundation.

Technologies

Key Features

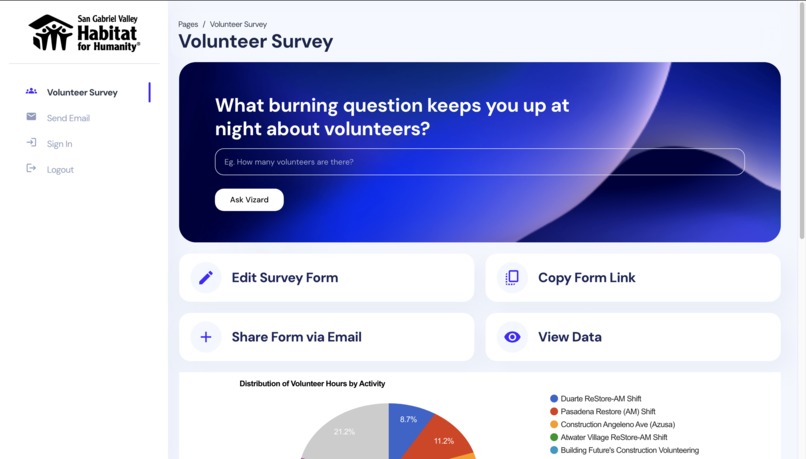

- Built a single CRM platform for San Gabriel Valley Habitat for Humanity that combines survey responses, Excel uploads, and manual inputs into one pipeline with role-based access control and data encryption. It has a Python/Flask backend, a MySQL database, and a React frontend with dynamically updating Google Sheets and Data Studio dashboards.

- Created an NLP-powered chatbot that allows staff to query real-time organizational data in natural language, reducing reporting friction across volunteer, homeowner, and home preservation workflows. It was built with LLM and NLP tooling and deployed as a cost-effective solution on free-tier Google infrastructure.